| State | Final |
|---|---|
| Date | 2008-11-03 |
| Proposed by | Ichthyostega |

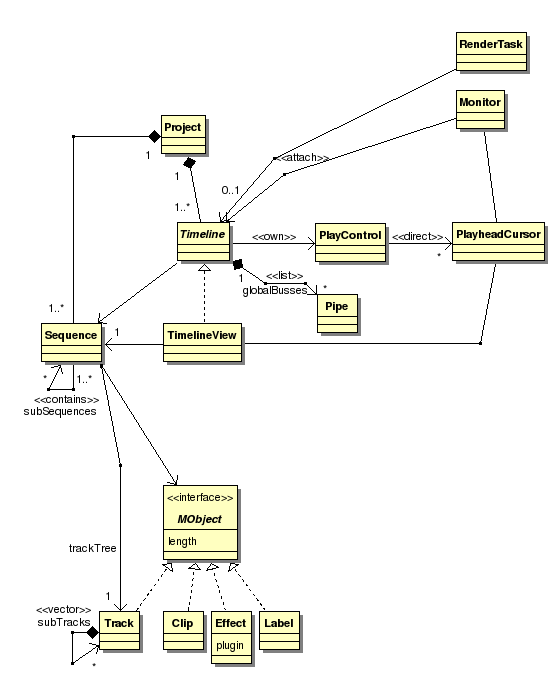

Relation of Project, Timeline(s), Sequence(s) and Output generation

In the course of our discussions it has become clear that Lumiera will show multiple timeline-like views within one project. Furthermore, support for nested Sequences as meta-clips is considered an essential goal. The purpose of this entry is to settle on some definitions and to clarify the relationships between these concepts.

Definitions

- Project: the top-level context in which all edit work is done over an extended period of time. The Project can be saved and re-opened. It comprises everything the user is working on: all information, assets, state and objects to be edited.

- Session: the current in-memory representation of the Project when opened within an instance of Lumiera. This is an implementation-internal term. For the GUI and from the user’s point of view, we should always prefer the term »Project« for the general concept.

- Timeline: the top-level element within the Project. It is visible within a timeline view in the GUI and represents the effective (resulting) arrangement of media objects, resolved to a finite time axis, to be rendered for output or viewed in a Monitor (viewer window). Timeline(s) are top-level and may not be further combined. A timeline comprises:
  - a time axis in absolute time (WIP: not clear if this is an entity or just a conceptual definition)
  - a PlayController
  - a list of global Pipes representing the possible outputs (master busses)
  - exactly one top-level Sequence, which in turn may contain further nested Sequences

- Timeline View: a view in the GUI featuring a given timeline. There might be multiple views of the same timeline, all sharing the same PlayController. A proposed extension is the ability to focus a timeline view on a sub-Sequence contained within the top-level sequence of the underlying Timeline (intended for editing meta-clips).

- Sequence: a collection of MObjects placed onto a Fork (tree of tracks). The “Sequence” entity was formerly named EDL; an alternative name would be Arrangement. By means of this placement, the objects can be anchored relative to each other, relative to external objects, or absolute in time. Placement and routing information can be inherited down the fork (track tree), and missing information is filled in by configuration rules. This way, a sequence can connect to the global pipes when used as the top-level sequence within a timeline, or alternatively it can act as virtual media when used within a meta-clip (nested sequence). In the default configuration, a Sequence contains just a simple fork (root track) without nested sub-forks and sends directly to the master busses of the timeline.

- Pipe: the conceptual building block of the high-level model. It can be thought of as a simple linear processing chain. A stream can be sent to a pipe, in which case it will be mixed in at the input, and the output of a pipe can be plugged into another destination. Further, effects or processors can be attached to the pipe. Besides the global pipes (busses) in each Timeline, each clip automatically creates N pipes (one for each distinct content stream, i.e. normally N=2, namely video and audio).

- PlayController: coordinates playback, cueing and rewinding of a PlayheadCursor (or several, in case there are multiple views and/or monitors), and at the same time directs a render process to deliver the media data needed for playback. The implementation of the PlayController(s) is assumed to live in the application core.

- RenderTask: basically a PlayController, but collecting output directly, without moving a PlayheadCursor (maybe a progress indicator) and not operating in a timed fashion, but freewheeling or in background mode.

- Monitor/Viewer: a viewer window to be attached to a timeline. When attached, a monitor reflects the state of the timeline’s PlayController, and it attaches to the Timeline’s global pipes by stream-type match, showing video as the monitor image and sending audio to the system audio port (ALSA or JACK). Possible extensions are for a monitor to be able to attach to probe points within the render network, to show a second stream as a (partial) overlay for comparison, or to be collapsed to a mere control for sending video to a dedicated monitor (separate X display or FireWire).
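The Pipe and Monitor definitions above can be illustrated with a small conceptual sketch. It is not Lumiera code: the class layout, the method names `send`, `plug_into` and `attach`, the helper `pipes_for_clip`, and the use of plain strings as stream types are all assumptions made for illustration only.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Pipe:
    """Hypothetical model of a pipe: a linear processing chain for one stream type."""
    name: str
    stream_type: str                                   # assumed: "video" or "audio"
    effects: List[str] = field(default_factory=list)   # attached effects/processors
    inputs: List[str] = field(default_factory=list)    # streams mixed in at the input
    destination: Optional["Pipe"] = None               # where the output is plugged

    def send(self, stream: str) -> None:
        """Send a stream to this pipe; it is mixed in at the input."""
        self.inputs.append(stream)

    def plug_into(self, destination: "Pipe") -> None:
        """Plug this pipe's output into another pipe."""
        self.destination = destination
        destination.send(self.name)


def pipes_for_clip(clip: str, stream_types=("video", "audio")) -> List[Pipe]:
    """Each clip creates one pipe per distinct content stream (normally N=2)."""
    return [Pipe(f"{clip}/{st}", st) for st in stream_types]


@dataclass
class Monitor:
    """Hypothetical viewer that attaches to global pipes by stream-type match."""
    attached: Dict[str, Pipe] = field(default_factory=dict)

    def attach(self, global_pipes: List[Pipe]) -> None:
        # Assumption: the first global pipe of each stream type wins.
        for pipe in global_pipes:
            self.attached.setdefault(pipe.stream_type, pipe)
```

In this sketch, a clip's video pipe would be plugged into the timeline's video master bus, and a monitor attached to the list of global pipes would pick up one bus per stream type. How ambiguous matches are resolved is left open here, as it is in the text.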

Relations

- this UML shows the relation of concepts, not so much their implementation

- within one Project, we may have multiple independent timelines and at the same time we may have multiple views of the same timeline.

- all playhead displays within different views linked to the same underlying timeline are effectively linked together, as are all GUI widgets representing the same PlayController owned by a single timeline.

- I am proposing to do it this way by default, because it seems to be the best match for the user’s expectation (it is well known that multiple playback cursors tend to confuse the user)

- the timeline view is modelled as a sub-concept of “Timeline” and thus can stand in for it. Thus, to start with, for the GUI it doesn’t make any difference whether it talks to a timeline view or a timeline.

- each timeline refers to a (top-level) sequence. I.e. the sequences themselves are owned by the Project, and theoretically it’s possible to refer to the same sequence from multiple timelines, directly and indirectly.

- besides, it’s also possible to create multiple independent timelines, in an extreme case even when referring to the same top-level sequence. This configuration gives the ability to play the same arrangement in parallel with multiple independent play controllers (and thus independent playhead positions)

- to complement these possibilities, I’d propose to give the Timeline View the possibility to be focused (re-linked) to a sub-sequence. This way, it would stay connected to the main play control, but at the same time show a sub-sequence in the way it will be treated as embedded within the top-level sequence. This would be the default operation mode when a meta-clip is opened (and shown in a separate tab with such a linked timeline view). The reason for this proposed handling is again to give the user the least surprising behaviour. If, on the contrary, the sub-sequence were opened as a separate timeline, a different absolute time position and a different signal routing might result; this should be reserved for advanced use, e.g. when multiple editors cooperate on a single project and a sequence has to be prepared in isolation prior to being integrated into the global sequence (featuring the whole movie).

- one rather unconventional feature to be noted is that the Tracks (actually a »Fork« ≙ a tree of Tracks) are within the Sequences and not on the level of the global busses, as in most other video and audio editors. The rationale is that this allows for fully exploiting the tree structure, even when working with large and compound projects, and it allows sequences to be local clusters of objects including overlays, masks etc. In particular, this allows a sequence to be used interchangeably as virtual media and, at the same time, as the contents of another top-level timeline.
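The relations listed above can be made concrete with a minimal sketch. It models concepts only, in the same spirit as the UML; all class, method and attribute names (`add_timeline`, `open_view`, `focus`, `seek`, `playhead`) are hypothetical and not taken from the Lumiera code base.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Sequence:
    name: str
    nested: List["Sequence"] = field(default_factory=list)  # meta-clips

    def contains(self, other: "Sequence") -> bool:
        """True if `other` is nested somewhere below this sequence."""
        return any(sub is other or sub.contains(other) for sub in self.nested)


@dataclass
class PlayController:
    position: float = 0.0

    def seek(self, time: float) -> None:
        self.position = time


@dataclass
class Timeline:
    name: str
    sequence: Sequence  # exactly one top-level sequence
    controller: PlayController = field(default_factory=PlayController)

    def open_view(self, focus: Optional[Sequence] = None) -> "TimelineView":
        return TimelineView(self, focus)


@dataclass
class TimelineView:
    timeline: Timeline
    focus: Optional[Sequence] = None  # proposed extension: focus on a sub-sequence

    def __post_init__(self) -> None:
        if self.focus is not None and not self.timeline.sequence.contains(self.focus):
            raise ValueError("focus must be nested within the timeline's sequence")

    @property
    def shown_sequence(self) -> Sequence:
        return self.focus if self.focus is not None else self.timeline.sequence

    @property
    def playhead(self) -> float:
        # All views of one timeline share that timeline's PlayController.
        return self.timeline.controller.position


@dataclass
class Project:
    sequences: List[Sequence] = field(default_factory=list)  # owned by the Project
    timelines: List[Timeline] = field(default_factory=list)

    def add_timeline(self, name: str, sequence: Sequence) -> Timeline:
        if not any(s is sequence for s in self.sequences):
            self.sequences.append(sequence)
        timeline = Timeline(name, sequence)
        self.timelines.append(timeline)
        return timeline
```

Under these assumptions, two views opened on the same timeline report the same playhead after a `seek`, even if one of them is focused on a nested sequence, while a second timeline referring to the same top-level sequence keeps its own, independent playhead.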

Tasks

- Interfaces on the Stage and Steam level need to be fully specified. Especially, »Timeline« is now promoted to be a new top-level entity within the Session

- communication between the PlayController(s) and the GUI needs to be worked out

- the stream type system, which is needed to make this default connection scheme work, is currently just planned and drafted. An exemplary implementation for GAVL-based streams is on my immediate agenda and should help to unveil any lurking detail problems in this design.

- with the proposed focusing of the timeline view on a sub-sequence, there are dark corner cases to be explored in detail to find out if this is possible; otherwise we’d need a new solution for editing the embedded sub-sequences

- of course we need to re-check the intended interactions from the GUI viewpoint (both design and GUI implementation)

Discussion

Pros

- this design naturally scales down to behave like the expected simple default case: one timeline, one track, simple video/audio out.

- but at the same time it allows for bewilderingly complex setups for advanced use

- separating signal flow and making the fork (“track tree”) local to the sequence solves the problem of how to combine independent sub-sequences into a compound session
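How a fork that is local to the sequence can still fall back to the simple default case may be sketched as follows. This is an illustration under assumptions, not the actual configuration-rule mechanism: the `Fork` class, its `output` attribute and the `resolve_output` method are hypothetical, and the "configuration rule" is reduced to a single default target passed in by the caller.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Fork:
    """Hypothetical node in a sequence's track tree."""
    name: str
    output: Optional[str] = None  # explicit routing target, if any
    parent: Optional["Fork"] = field(default=None, repr=False, compare=False)
    children: List["Fork"] = field(default_factory=list)

    def add(self, child: "Fork") -> "Fork":
        child.parent = self
        self.children.append(child)
        return child

    def resolve_output(self, default: str) -> str:
        """Inherit routing down the tree; fall back to `default` if nothing is set.

        `default` stands in for a configuration rule, e.g. the timeline's
        master bus for a top-level sequence (an assumption of this sketch).
        """
        node: Optional[Fork] = self
        while node is not None:
            if node.output is not None:
                return node.output
            node = node.parent
        return default
```

With a single root fork and no explicit routing, every object resolves to the default target, which corresponds to the simple case of one track sending to the master bus. Setting `output` on an inner fork changes the routing for that subtree only.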

Cons

- it is complicated

- it is partially uncommon, but not fully revolutionary, and thus might be misleading.

- the control handling in the GUI can become difficult (focus? key shortcuts?)

- the ability to have both separate timelines and timeline views can be very confusing. We really need to think about suitable UI design

- because the signal flow is separated from the tracks, we need to re-design the way common controls (fader, pan, effect UIs) are integrated instead of just using the well-known approach

Alternatives

- have just one Session with a list of Tracks, and do not attempt to cover the organisation of larger projects at all.

- allow only linear Sequences with one Track, not cluster-like sequences

- make the Tracks synonymous with the global busses as usual. Use an allocation mechanism when “importing” separate sub-projects

- rather make compound projects a loosely coupled collection of stand-alone projects, which are just “played” in sequence. Avoid nested referrals.

- don’t build nested structures, rather build one large timeline and provide flexible means for hiding and collapsing parts of it.

Rationale

Obviously, the usual solution was found to be limiting and difficult to work with in larger projects. On the other hand, the goal is not to rely on external project organisation, but rather to make Lumiera support more complicated structures without complicated “import/export” rules or the need to create a specific master document that differs from the standard timeline. The solution presented here seems to be more generic and to require less special-case handling than the conventional approach.

Comments

GUI handling could make use of expanded view features like…

- a drop-down view of a track that simply covers what was shown below. This may be used for quick, precise looks, for simple edits, or for clicking on a subtrack to burrow further down.

- show the expanded track view in a new tab. This creates another tabbed view, which makes the full window area available for a “magnified” view. It is very easy to jump back to the main track view, or to other view tabs (edit points).

- the main track view could show highlights for “currently created” views/edit points, and whether they are currently being used or not (active/inactive).

- each tab view could show a miniature view of the main track view (similar in concept to Linux desktop switching), to make it easy to figure out which other tabs to jump to without having to go back to the main view. This can be a user option, as not everybody would need this all of the time.

- the drop-down view could have some icons on its top bar, positioned very close to the point on the track that was clicked to trigger the drop-down. This close proximity keeps the mouse travel to commonly used (next) options minimal. Icons for common options might include: remove the drop-down view, create a new tab view (active edit point), create an edit point (but don’t open a new tab; just create the highlight zone on the track), temporarily “maximise” the drop-down view to the full window size (i.e. show the equivalent tab view in the current window).

- some of the “matrix type” view methods commonly used in spreadsheets, like locking horizontal and vertical positions (above or below, left or right of a marker) for scrolling; this can also be used for determining the limits of scrolling.

- the monitor view could include a toggle between showing the raw original track, showing zoomed and other camera/projector renders, or showing the full rendering including effects. This ties in with the idea of being able to link a monitor to any point in the node system, but it can be swiftly changed within the monitor view by icons mounted somewhere on each monitor’s perimeter.

- the track view itself could be considered a subview of a total-timeline track view, or some other method of “mapping out” the full project (more than one way of mapping it out may be offered as optional/default views).

This set of features is going to be very exciting and convenient to work with: a sort of Google Earth feature for global-sized projects.

- Tree, 2008-12-19 22:58:30